Most AI systems today follow the same basic contract. You give the system something, it gives you something back. The loop is clean, predictable, and quietly at odds with how people actually think.

This piece does not argue that current AI is wrong. It asks whether a different question is worth pursuing: one that shifts the focus from capability to understanding, from doing more to knowing deeper.

The Linear Problem

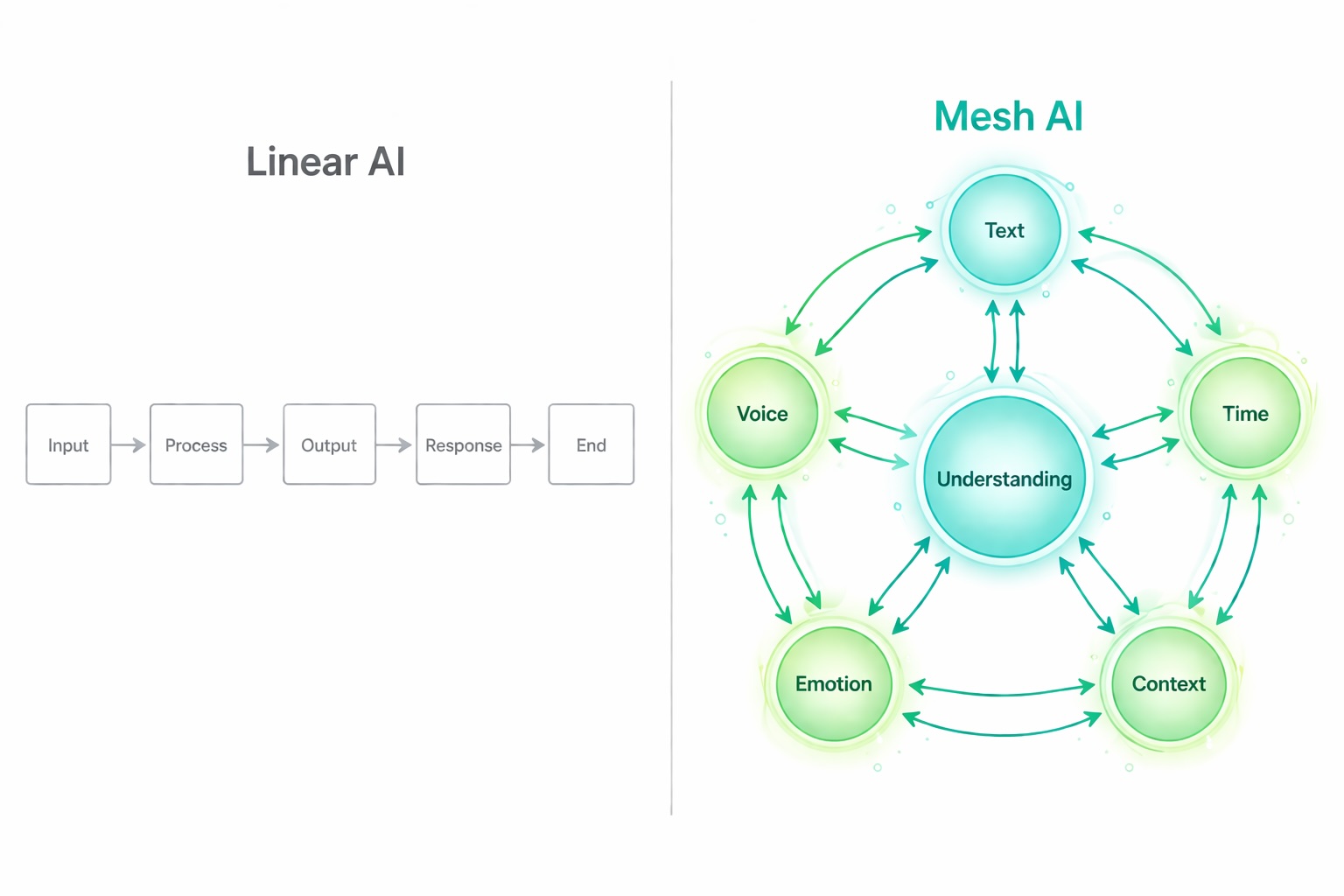

The dominant architecture of modern AI reflects not human cognition, but how society has trained people to communicate. One thing at a time, sequentially, explicitly. We learned to flatten ourselves to fit forms, interfaces, and systems built for single inputs. AI inherited that constraint and called it design.

But human cognition is not a pipeline. It is closer to a network: simultaneous, associative, deeply contextual.

A decision made at 2am carries the weight of that morning's argument, last week's failure, a half-remembered conversation from years ago, and the feeling in the room right now. None of these arrive as discrete inputs. They are layers, emotional and temporal and contextual, that shape each other before any response emerges.

Something is lost every time a feeling gets translated into a sentence, or a pattern gets reduced to a data point. Most AI systems are optimized to process what people say. The harder problem is understanding what they mean, and almost no system is built to hold the full texture of what someone is going through. Context is not just text or voice. It is the layer underneath: the reasons behind the words, the feeling behind the question, the environment that colored everything that day. A person who truly knows you does not need you to explain all of this. They already hold it.

This is what Mesh AI is trying to take seriously.

What Mesh AI Means

Mesh AI is a design philosophy, not a product category. At its core, it proposes that context should be multidirectional: not a single thread of conversation, but a living network of signals. What someone says, how they have been feeling, what they have been working toward, what time it is, what happened yesterday, what they have not said yet.

In a Mesh AI system, no single signal is authoritative. They inform each other. A journal entry shifts the meaning of a question. A pattern of late nights changes how a goal is interpreted. The system does not wait for explicit input to update its understanding. It is continuously building a richer picture, the way a person who knows you well does without being asked.
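One way to picture "no single signal is authoritative" is a small graph in which each signal's reading is blended with the readings of the signals it is linked to. The sketch below is only an illustration under stated assumptions, not a description of any existing Mesh AI implementation: the names `Signal`, `ContextMesh`, and `interpreted_strain`, the single `strain` score between 0 and 1, and the weighted-average rule are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Signal:
    """One piece of context, e.g. a question, a journal entry, a sleep pattern."""
    name: str
    strain: float  # hypothetical score in [0, 1]: how much strain this signal suggests alone
    links: dict[str, float] = field(default_factory=dict)  # neighbor name -> influence weight


class ContextMesh:
    """Hypothetical container in which linked signals inform each other."""

    def __init__(self) -> None:
        self.signals: dict[str, Signal] = {}

    def add(self, name: str, strain: float) -> None:
        self.signals[name] = Signal(name, strain)

    def link(self, a: str, b: str, weight: float) -> None:
        # Links are symmetric: each signal influences the other.
        self.signals[a].links[b] = weight
        self.signals[b].links[a] = weight

    def interpreted_strain(self, name: str) -> float:
        """Weighted average of the signal's own reading (weight 1.0) and its neighbors'."""
        signal = self.signals[name]
        total = signal.strain
        weight_sum = 1.0
        for other, weight in signal.links.items():
            total += weight * self.signals[other].strain
            weight_sum += weight
        return total / weight_sum
```

In this toy model, a calm-sounding question (strain 0.2) linked to a heavy journal entry (0.8, weight 1.0) and a run of late nights (0.9, weight 0.5) is read at 0.58 rather than 0.2. The question itself did not change; its surroundings did.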

The question Mesh AI is asking is different from the one most AI development is currently answering. The market is asking how much AI can do. Mesh AI is asking how deeply AI can understand. One is about expanding capability, the other about deepening attention. They are not in conflict, but they are not the same question.

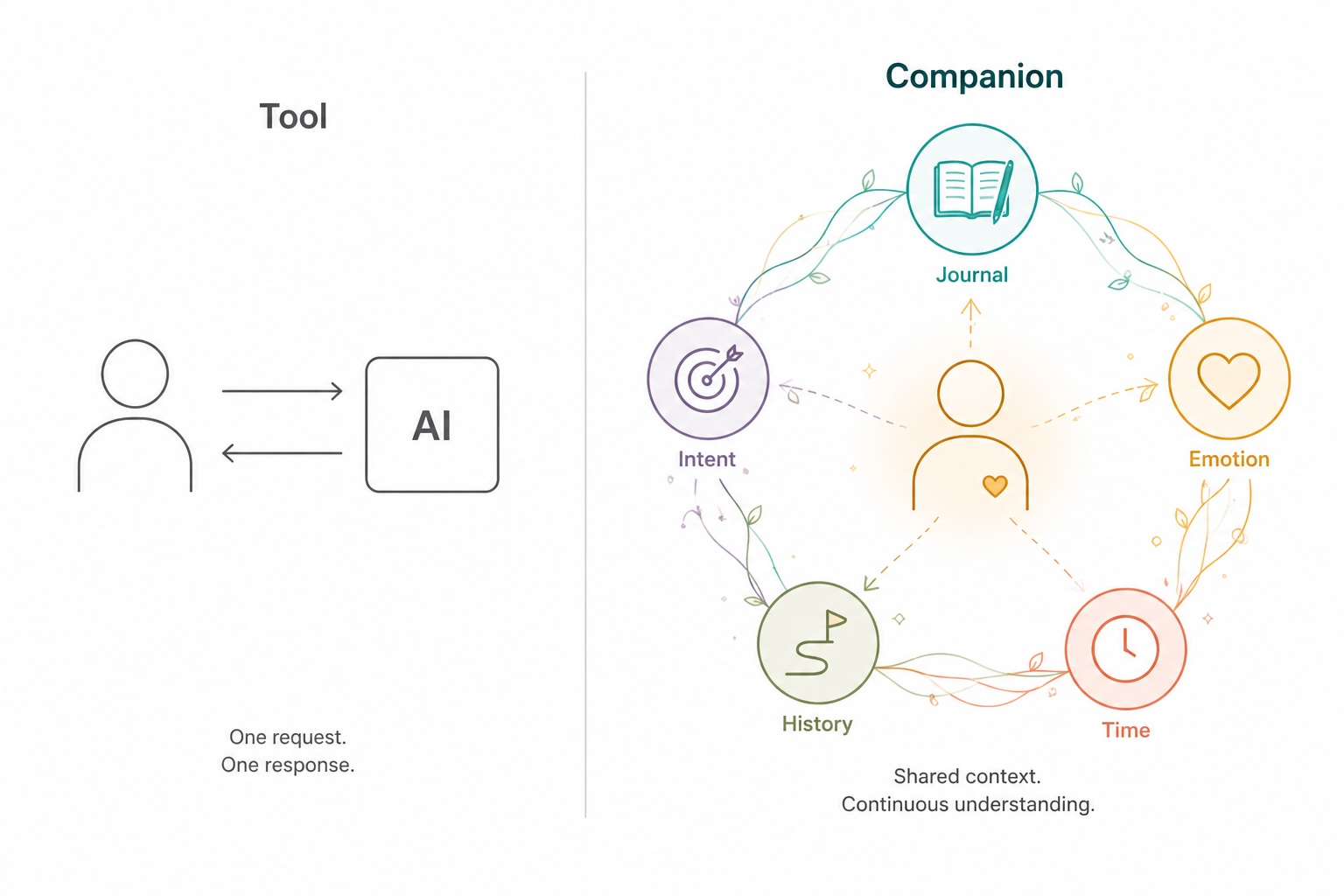

The Companion, Not the Tool

There is a meaningful difference between a tool and a companion. A tool responds to what you give it. A companion holds what you have not said yet.

This distinction matters in any context where continuity is what creates value: navigating a long project, carrying something difficult across days, working through ambiguity without a clear answer. In these moments, a system that only processes explicit input will always fall short. It answers the question you asked, not the one you needed to ask.

A companion built on Mesh AI principles does not need you to explain your context every time. It already knows your patterns, your rhythms, your tendencies under pressure. It has been listening across days, not waiting to be activated.

Offline and User Ownership

The richer the context, the more sensitive the data. A system that holds your emotional patterns, your behavioral rhythms, your intentions and routines knows something about you that most people in your life do not.

This is why Mesh AI, done seriously, has to be local and user-owned. The moment that network of signals leaves your device, it stops being about understanding you and starts being about modeling you for someone else's purposes.

Offline-first, in this framing, is less a technical choice and more an ethical one. An understanding this intimate should not depend on a server someone else controls or a subscription that can be cancelled out from under you. It belongs to the person it is about.

What Understanding Actually Requires

Understanding is not a feature that can be added to a system. It is something that accumulates over time, through repeated exposure to the same person across different states and contexts.

Most AI interactions reset. Each conversation starts from zero. The system does not know that you asked a similar question three weeks ago in a different mood, or that the goal you mentioned today has been sitting unresolved for months. It responds accurately to what is in front of it. But accuracy is not the same as understanding.
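The difference between a session that resets and one that remembers can be shown with a very small sketch. It assumes a single JSON file kept on the user's own device, in keeping with the local, user-owned framing of this piece. The class name `LocalMemory`, its methods, and the `day`, `topic`, and `mood` fields are hypothetical and chosen only for illustration.

```python
import json
from pathlib import Path


class LocalMemory:
    """Append-only record of past exchanges, stored in one file on the user's device."""

    def __init__(self, path: str) -> None:
        self.path = Path(path)
        # A new session loads what earlier sessions wrote instead of starting from zero.
        if self.path.exists():
            self.entries = json.loads(self.path.read_text(encoding="utf-8"))
        else:
            self.entries = []

    def record(self, day: str, topic: str, mood: str) -> None:
        """Add one exchange and write the full record back to disk."""
        self.entries.append({"day": day, "topic": topic, "mood": mood})
        self.path.write_text(json.dumps(self.entries, indent=2), encoding="utf-8")

    def history(self, topic: str) -> list:
        """Return every earlier exchange on the same topic, oldest first."""
        return [entry for entry in self.entries if entry["topic"] == topic]
```

Creating a second `LocalMemory` on the same path stands in for opening a new conversation weeks later: `history("career change")` would still return the earlier entry, along with the mood it was recorded in. Nothing here leaves the device, and nothing here amounts to understanding; it only removes the reset.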

What Mesh AI points toward is something closer to how understanding actually develops between people: not through a single exchange, but through repeated contact across time. The journal entry that shifts how a question lands, the pattern of behavior that gives a goal its real meaning, the emotional context that makes the same words mean different things on different days.

This is the direction projects like He@lio and Project Sentinel are already exploring. Not to build smarter systems, but to build systems that stay with a person long enough for understanding to actually develop.

Most AI development optimizes for what can be measured: task completion, response accuracy, speed. Understanding is harder to measure, and presence is harder to ship. But these are the things that make the difference between a system that responds and one that genuinely accompanies.

That gap is not a technical one. It is a human one, and it is still open.
